Edge Deployment of HY-MT1.5-1.8B

Introduction

HY-MT1.5-1.8B is version 1.5 of the Hunyuan translation model from Tencent. As an upgraded version of the WMT25 championship model, it is optimized for explanatory translation and mixed-language scenarios, and adds support for terminology intervention, contextual translation, and formatted translation. Despite having less than one-third the parameters of HY-MT1.5-7B, HY-MT1.5-1.8B delivers translation performance comparable to its larger counterpart while running faster. After quantization, the 1.8B model can be deployed on edge devices and used for real-time translation.
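As a rough illustration of why quantization matters for edge deployment, the sketch below estimates weight storage from the nominal parameter count. It assumes exactly 1.8 billion parameters (read off the model name) and that every weight is stored at the stated bit width. Real packages also carry quantization scales, tokenizer data, and a KV cache, so actual file sizes and memory use will differ.

```python
def weight_storage_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NOMINAL_PARAMS = 1.8e9  # assumption: taken from the "1.8B" in the model name

fp16_gb = weight_storage_gb(NOMINAL_PARAMS, 16)  # unquantized 16-bit weights
int4_gb = weight_storage_gb(NOMINAL_PARAMS, 4)   # 4-bit quantized weights

print(f"16-bit weights: ~{fp16_gb:.1f} GB")
print(f" 4-bit weights: ~{int4_gb:.1f} GB")
```

Under these assumptions, 4-bit weights need a quarter of the storage of 16-bit weights, which is what brings a model of this size within reach of an edge device.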

This chapter demonstrates how to complete the deployment, loading, and translation workflow of HY-MT1.5-1.8B on edge devices. Two deployment methods are provided:

- AidGen C++ API

- AidGenSE OpenAI API

In this example, Large Language Model (LLM) inference runs entirely on the device. Application code calls the inference interface with the user's input and receives the result in real time.

- Device: Rhino Pi-X1

- System: Ubuntu 22.04

- Model: HY-MT1.5-1.8B

Supported Platforms

| Platform | Execution Method |
|---|---|
| Rhino Pi-X1 | Ubuntu 22.04, AidLux |

Prerequisites

- Rhino Pi-X1 hardware.

- Ubuntu 22.04 system or AidLux system.

AidGen Deployment

Step 1: Install AidGen SDK

# Install AidGen SDK

sudo aid-pkg update

sudo aid-pkg -i aidgen-sdk

# Copy test code

cd /home/aidlux

cp -r /usr/local/share/aidgen/examples/cpp/aidllm .

Step 2: Download Model Resources

Since HY-MT1.5-1.8B is currently in the Model Farm preview section, it must be obtained via the mms command.

# Login

mms login

# Search for the model

mms list HY

# Download the model

mms get -m HY-MT1.5-1.8B -p w4a16 -c qcs8550 -b qnn2.36 -d /home/aidlux/aidllm

cd /home/aidlux/aidllm

unzip qnn236_qcs8550_cl2048.zip

mv qnn236_qcs8550_cl2048/* .

Step 3: Create Configuration File

cd /home/aidlux/aidllm

vim hy-mt-aidgen-config.json

Create the following JSON configuration file:

{
  "backend_type": "genie",
  "prefix_path": "kv-cache.primary.qnn-htp",
  "model": {
    "path": [
      "hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_1_of_2.serialized.bin.aidem",
      "hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_2_of_2.serialized.bin.aidem"
    ]
  }
}

Step 4: Verify Resource Files

The file layout should be as follows:

/home/aidlux/aidllm
├── CMakeLists.txt
├── test_prompt_abort.cpp
├── test_prompt_serial.cpp
├── aidgen_chat_template.txt
├── chat.txt
├── htp_backend_ext_config.json
├── hy-mt1.5-1.8b-htp.json
├── hy-mt-aidgen-config.json
├── kv-cache.primary.qnn-htp
├── hy-mt1.5-1.8b-tokenizer.json
├── hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_1_of_2.serialized.bin.aidem
└── hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_2_of_2.serialized.bin.aidem

Step 5: Set Conversation Template
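Before editing the template, it can be worth confirming that the configuration from Step 3 and the files from Step 4 agree. The following is a minimal sketch, not part of the AidGen SDK: the helper name `missing_model_files` is hypothetical, and it assumes that the entries under `model.path` are resolved relative to the directory containing the configuration file, as the layout above suggests.

```python
import json
from pathlib import Path


def missing_model_files(config_path):
    """Return the model files named in the config that are not on disk.

    Assumption: relative paths are resolved against the directory that
    holds the config file.
    """
    config_path = Path(config_path)
    base_dir = config_path.parent
    config = json.loads(config_path.read_text(encoding="utf-8"))

    # The model shards are listed under model.path in the Step 3 config.
    expected = list(config["model"]["path"])
    return [name for name in expected if not (base_dir / name).is_file()]


if __name__ == "__main__":
    config_file = Path("/home/aidlux/aidllm/hy-mt-aidgen-config.json")
    if not config_file.is_file():
        print("Config not found:", config_file)
    else:
        missing = missing_model_files(config_file)
        if missing:
            print("Missing model files:", missing)
        else:
            print("All model files listed in the config are present.")
```

Because the script parses the file with `json.loads`, it also fails early on a syntax error in the configuration, such as a trailing comma.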

💡Note

Please refer to the aidgen_chat_template.txt file in the model resource package for the conversation template.

Modify the test_prompt_serial.cpp file according to the LLM template:

// test_prompt_serial.cpp

// ...

// lines 43-47

std::string prompt_template_type = "hy-mt";

if(prompt_template_type == "hy-mt"){

prompt_template = "<|hy_begin▁of▁sentence|><|hy_place▁holder▁no▁3|>\n<|hy_begin▁of▁sentence|>\n<|hy_User|>Translate the following segment into Chinese, without additional explanation.\n\n{0}\n<|hy_Assistant|>";

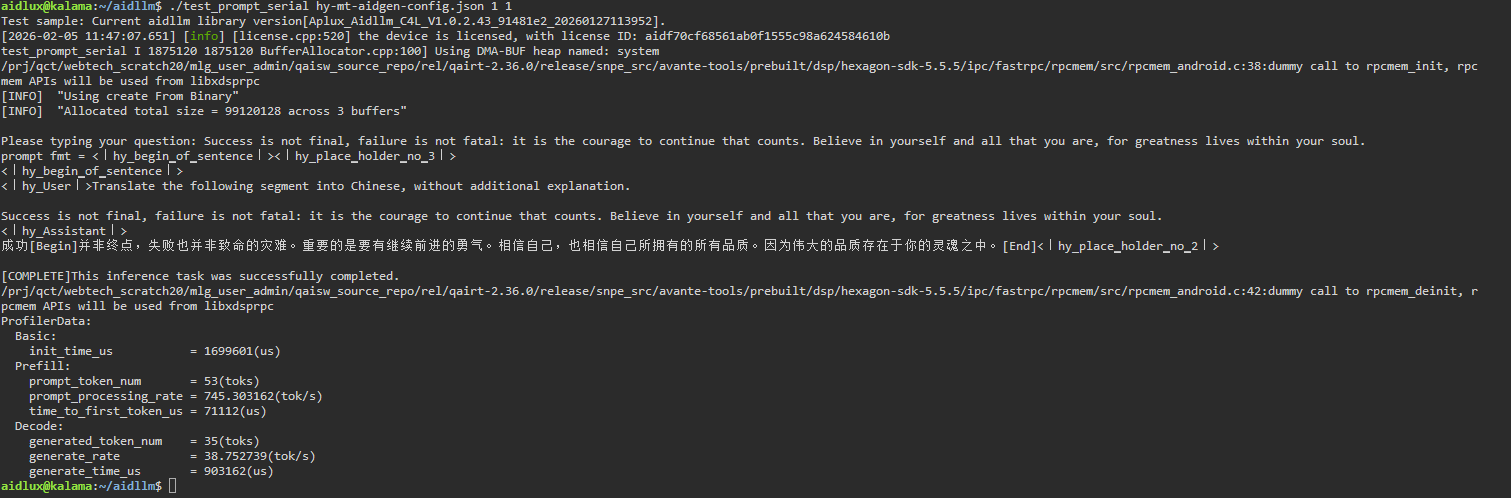

}Step 6: Compilation and Execution

# Install dependencies

sudo apt update

sudo apt install libfmt-dev

# Compile

mkdir build && cd build

cmake .. && make

# Run after successful compilation

# The first parameter `1` enables profiler statistics

# The second parameter `1` specifies the number of inference iterations

mv test_prompt_serial /home/aidlux/aidllm/

cd /home/aidlux/aidllm/

./test_prompt_serial hy-mt-aidgen-config.json 1 1

- Enter the sentence to be translated in the terminal.

💡Note

With the conversation template from Step 5, the model acts as a translator: any text entered in another language is translated into Chinese.
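To make the effect of the template concrete, the sketch below fills the `{0}` slot of the Step 5 template in Python. It is an illustration only: `build_prompt` and its `target_language` parameter are hypothetical, and the idea that changing the language name in the instruction retargets the translation is an assumption that should be checked against aidgen_chat_template.txt. Python's `str.format` happens to accept the same positional `{0}` syntax as the template string.

```python
# Template copied from Step 5; "{0}" is the slot for the source text.
HY_MT_TEMPLATE = (
    "<|hy_begin▁of▁sentence|><|hy_place▁holder▁no▁3|>\n"
    "<|hy_begin▁of▁sentence|>\n"
    "<|hy_User|>Translate the following segment into Chinese, "
    "without additional explanation.\n\n{0}\n<|hy_Assistant|>"
)


def build_prompt(source_text, target_language="Chinese"):
    """Return the full prompt for one input (hypothetical helper)."""
    template = HY_MT_TEMPLATE
    if target_language != "Chinese":
        # Assumption: only the language name in the instruction changes.
        template = template.replace("into Chinese,", f"into {target_language},")
    # The source text is substituted as a value, so braces in it are safe.
    return template.format(source_text)


if __name__ == "__main__":
    print(build_prompt("Success is not final, failure is not fatal."))
```

The printed prompt shows that the user's sentence is wrapped in a fixed translation instruction, which is why the terminal session in Step 6 translates rather than chats.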

AidGenSE Deployment

Step 1: Install AidGenSE

sudo aid-pkg update

# Ensure that aidgense is the latest version.

sudo aid-pkg remove aidgense

sudo aid-pkg -i aidgense

Step 2: Model Query & Acquisition

# Check available models

aidllm remote-list api | grep HY

#------------------------ HY-MT1.5-1.8B model can be seen ------------------------

Current Soc : 8550

Name Url CreateTime

----- --------- ---------

HY-MT1.5-1.8B-8550 aplux/HY-MT1.5-1.8B-8550 2026-02-04 13:48:59

...

# Download HY-MT1.5-1.8B

aidllm pull api aplux/HY-MT1.5-1.8B-8550

Step 3: Start HTTP Service

# Start the OpenAI API service for the corresponding model

aidllm start api -m HY-MT1.5-1.8B-8550

# Check status

aidllm status api

# Stop service: aidllm stop api

# Restart service: aidllm restart api

💡Note

The default port number is 8888.
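A quick way to confirm that something is listening on that port is a plain TCP connection attempt. The sketch below uses only the Python standard library; the helper name `is_port_open` is hypothetical, and a successful connection shows only that the port accepts connections, not that the model has finished loading.

```python
import socket


def is_port_open(host="127.0.0.1", port=8888, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, unreachable host, and timeouts.
        return False


if __name__ == "__main__":
    state = "reachable" if is_port_open() else "not reachable"
    print(f"Port 8888 on 127.0.0.1 is {state}")
```

If the port is not reachable, `aidllm status api` from the commands above is the next thing to check.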

Step 4: Chat Test

Chat Test via Web UI

# Install UI frontend service

sudo aidllm install ui

# Start UI service

aidllm start ui

# Check UI service status: aidllm status ui

# Stop UI service: aidllm stop ui

After the UI service starts, open http://ip:51104 in a browser, replacing ip with the IP address of the device.

Please use the following conversation template for translation:

Translate the following segment into Chinese, without additional explanation.

Success is not final, failure is not fatal: it is the courage to continue that counts. Believe in yourself and all that you are, for greatness lives within your soul.

Chat Test via Python

import json

import requests


def stream_chat_completion(messages, model="HY-MT1.5-1.8B-8550"):
    url = "http://127.0.0.1:8888/v1/chat/completions"
    headers = {"Content-Type": "application/json"}
    payload = {
        "model": model,
        "messages": messages,
        "stream": True,  # Enable streaming
    }

    # Initiate request with stream=True
    response = requests.post(url, headers=headers, json=payload, stream=True)
    response.raise_for_status()

    # Read line by line and parse SSE format
    for line in response.iter_lines():
        if not line:
            continue
        line_data = line.decode("utf-8")
        # Each SSE line starts with the "data: " prefix
        if line_data.startswith("data: "):
            data = line_data[len("data: "):]
            # End flag
            if data.strip() == "[DONE]":
                break
            try:
                chunk = json.loads(data)
            except json.JSONDecodeError:
                # Print and skip on parsing error
                print("Unable to parse JSON:", data)
                continue
            # Extract the token output by the model
            content = chunk["choices"][0]["delta"].get("content")
            if content:
                print(content, end="", flush=True)


if __name__ == "__main__":
    # Example conversation
    messages = [
        {"role": "system", "content": ""},
        {"role": "user", "content": "Translate the following segment into Chinese, without additional explanation.\n\nSuccess is not final, failure is not fatal: it is the courage to continue that counts. Believe in yourself and all that you are, for greatness lives within your soul."}
    ]
    print("Assistant:", end=" ")
    stream_chat_completion(messages)
    print()  # New line
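When the translation is needed as a value rather than printed to the terminal, the same parsing logic can be factored into a function that accumulates the streamed pieces. The sketch below is one possible shape, with a hypothetical helper name `collect_stream_text`. It assumes the service emits OpenAI-style chunks with a `choices[0].delta.content` field, as the script above does, and it is exercised here on hand-written sample lines rather than a live server.

```python
import json


def collect_stream_text(lines):
    """Join the content deltas from an iterable of raw SSE lines (bytes)."""
    parts = []
    for raw in lines:
        if not raw:
            continue  # blank lines separate SSE events
        text = raw.decode("utf-8")
        if not text.startswith("data: "):
            continue
        data = text[len("data: "):].strip()
        if data == "[DONE]":
            break  # end-of-stream marker
        try:
            chunk = json.loads(data)
        except json.JSONDecodeError:
            continue  # skip malformed chunks instead of aborting
        choices = chunk.get("choices") or [{}]
        content = choices[0].get("delta", {}).get("content")
        if content:
            parts.append(content)
    return "".join(parts)


# Offline check with sample lines shaped like OpenAI-style stream chunks.
sample = [
    b'data: {"choices": [{"delta": {"content": "Hello"}}]}',
    b"",
    b'data: {"choices": [{"delta": {"content": ", world"}}]}',
    b"data: [DONE]",
]
print(collect_stream_text(sample))
```

In the script above, passing `response.iter_lines()` to this function would return the full translation as a single string, which is easier to log or post-process than incremental prints.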