Quick Start with Web

This section explains how to use the web version of Model Farm to quickly evaluate models and run examples.

Hardware Preparation

The models provided by Model Farm are optimized for Qualcomm Dragonwing IoT chip platforms and have undergone performance testing on development boards featuring Qualcomm silicon. Model Farm currently supports the following Qualcomm chip models:

- Qualcomm QCS6490

- Qualcomm QCS8550

- Qualcomm QCS8625

- Qualcomm Dragonwing™ IQ9

- Qualcomm Dragonwing™ IQ8

Prepare a Developer Account

Developers can browse model information and performance metrics on Model Farm without logging in.

However, a developer account is required to download models and sample code.

- Register as an Aplux Developer

- Please visit: Developer Account Registration

- Follow the registration form prompts and requirements to fill in your developer information.

- After ensuring the information is correct, submit your account creation request.

Log in to Model Farm

Visit the Model Farm website and log in with your developer account.

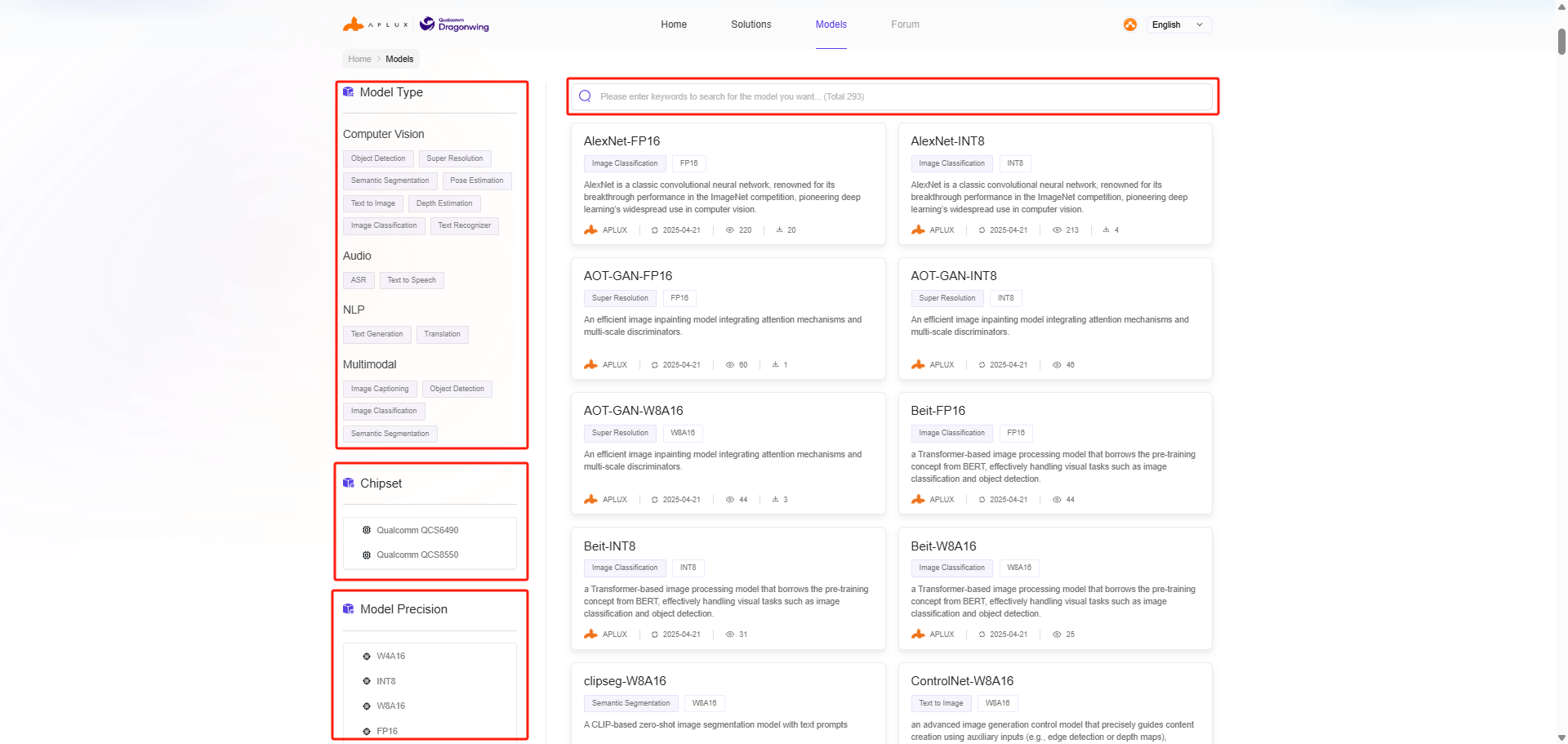

Search for Models

Developers can search for models on Model Farm based on their requirements to view detailed specifications and perform quick evaluations.

Access the Model Farm website via a browser to browse and view model details.

Model Farm provides multiple ways to filter and find models:

- Filter by model type

- Filter by model data precision

- Filter by chip platform

- Keyword search

View Model Details

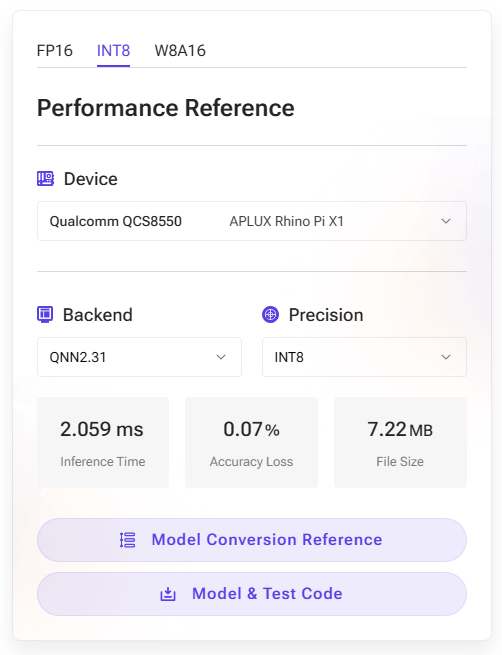

The model details page on Model Farm reports the measured performance of AI models at different quantization precisions on the corresponding hardware.

- Device: The development board and corresponding chip model used for the actual performance measurement.

- AI Framework: The framework and version number used for model conversion and inference.

- Model Data Precision: The data precision used by the converted model.

- Inference Latency: The actual measured time for model execution, excluding pre- and post-processing.

- Accuracy Loss: Measured as the cosine similarity between the outputs of the source model (FP32) and the converted model; a value closer to 1 means less deviation from the source model.

- Model Size: The file size of the converted model.
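The Accuracy Loss metric above can be illustrated with a short calculation. The following is a minimal sketch, not Model Farm's actual measurement code: it assumes the outputs of both models have already been flattened into plain lists of floats, and the function name `cosine_similarity` and the sample values are illustrative only.

```python
import math
from typing import Sequence


def cosine_similarity(reference: Sequence[float], converted: Sequence[float]) -> float:
    """Return the cosine similarity between two flattened model outputs."""
    if len(reference) != len(converted):
        raise ValueError("both outputs must contain the same number of elements")

    dot = sum(r * c for r, c in zip(reference, converted))
    norm_ref = math.sqrt(sum(r * r for r in reference))
    norm_conv = math.sqrt(sum(c * c for c in converted))

    if norm_ref == 0.0 or norm_conv == 0.0:
        raise ValueError("cosine similarity is undefined for an all-zero output")

    return dot / (norm_ref * norm_conv)


if __name__ == "__main__":
    # Hypothetical outputs: FP32 source model vs. a quantized conversion.
    fp32_output = [0.10, 0.70, 0.20]
    quantized_output = [0.11, 0.69, 0.20]
    print(f"cosine similarity: {cosine_similarity(fp32_output, quantized_output):.6f}")
```

Identical outputs give a similarity of 1, so results slightly below 1 indicate a small deviation introduced by conversion.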

💡Note

For the same SoC, model performance may vary across different hardware specifications; these figures should be used as a reference only.
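Because the published figures are reference values, it can be useful to measure latency on your own board. The sketch below times only the model execution, in line with the Inference Latency definition above, which excludes pre- and post-processing. It assumes the inference step is wrapped in a zero-argument callable; `run_inference`, `warmup`, and `runs` are hypothetical names chosen for illustration and are not part of any Model Farm or AidLite API.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency_ms(
    run_inference: Callable[[], object],
    warmup: int = 5,
    runs: int = 50,
) -> Dict[str, float]:
    """Time repeated calls to run_inference and summarize them in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")

    # Warm-up calls are executed but not timed, so one-off setup cost is excluded.
    for _ in range(warmup):
        run_inference()

    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        run_inference()  # only the model execution sits inside the timed region
        samples.append((time.perf_counter() - start) * 1000.0)

    return {
        "mean_ms": statistics.mean(samples),
        "median_ms": statistics.median(samples),
        "min_ms": min(samples),
        "max_ms": max(samples),
    }


if __name__ == "__main__":
    # Placeholder workload standing in for a real inference call.
    print(measure_latency_ms(lambda: sum(range(10_000)), warmup=2, runs=10))
```

Pre-processing (for example image resizing) and post-processing (for example box decoding) should stay outside the callable so that the numbers remain comparable with the figures on the details page.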

Example of YOLOv5s on Rhino Pi-X1 (Qualcomm QCS8550):

Download Models

Click the corresponding button in the Performance Reference section of the model details page to download the model and code package.

💡Note

For models in the Preview section (where the button displays: Contact Us), direct web downloads are not available. These must be downloaded using the MMS tool on an Aplux development board. For details, please refer to: Quick Start (MMS)

Model Testing

Models downloaded via the Model Farm web version can be tested for inference using the following two methods:

Using APLUX AidLite for Inference

Aplux provides the AidLite AI Inference Framework, which is used to invoke the Qualcomm NPU on edge devices for AI model inference.

Every model on Model Farm is supported by the AidLite SDK and can run inference through it. Model Farm also provides pre- and post-processing code for each model so that developers can quickly verify model results.

By following the Download Models steps, developers will obtain a complete package containing the model file and inference code. The file structure is as follows (using YOLOv5s as an example):

    /model_farm_yolov5s
    |__ models      # Model files
    |__ python      # Python-based inference code
    |__ cpp         # C++-based inference code
    |__ README.md   # Model specifications & software dependency installation guide

For a specific example, please refer to: YOLOv5 Deployment
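After extracting a downloaded package, a quick check can confirm that it matches the layout described above. This is a minimal sketch that assumes the four top-level entries shown for YOLOv5s; the helper name `missing_entries` is illustrative, and other models may ship additional files.

```python
from pathlib import Path
from typing import List

# Top-level entries taken from the YOLOv5s package layout described above.
EXPECTED_ENTRIES = ("models", "python", "cpp", "README.md")


def missing_entries(package_dir: str) -> List[str]:
    """Return the expected top-level entries that are absent from package_dir."""
    root = Path(package_dir)
    return [name for name in EXPECTED_ENTRIES if not (root / name).exists()]


if __name__ == "__main__":
    missing = missing_entries("model_farm_yolov5s")
    if missing:
        print("Package is incomplete, missing:", ", ".join(missing))
    else:
        print("Package layout looks complete.")
```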

💡Note

For models in the Preview section (where the button displays: Contact Us), inference can only be performed using the AidLite SDK on an Aplux development board.

Using Qualcomm QNN for Inference

Please refer to the Qualcomm QNN Documentation

Advanced Usage: Converting and Testing Fine-tuned Models

Aplux provides the AIMO Model Optimization Platform to convert models into formats specific to Qualcomm platforms.

Most models supported by Model Farm can be converted using AIMO. Consequently, Model Farm provides not only the optimized model files but also the reference conversion steps for using AIMO.

AIMO model conversion reference steps can be found in two places:

- In the Performance Reference module on the right side of the model details page; click Model Conversion Reference to access it.

- In the Model Conversion Reference section of the README.md file within the code package.

For an introduction and usage guide for AIMO, please refer to: AIMO Model Optimization Platform User Guide

Developers can simply replace the model in the Model Farm example with the .amf model file output by AIMO.
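The replacement step can be sketched as a small file operation. This is an illustration under stated assumptions, not an official tool: it assumes the package keeps its model under the `models` directory as shown in the package layout, and that you know the file name the example code loads (check the package README.md or the inference script). The helper `replace_model` and all paths in the usage example are hypothetical.

```python
import shutil
from pathlib import Path


def replace_model(package_dir: str, new_model: str, target_name: str) -> Path:
    """Copy new_model to <package_dir>/models/<target_name>, backing up any existing file."""
    models_dir = Path(package_dir) / "models"
    if not models_dir.is_dir():
        raise FileNotFoundError(f"models directory not found: {models_dir}")

    source = Path(new_model)
    if not source.is_file():
        raise FileNotFoundError(f"converted model not found: {source}")

    target = models_dir / target_name
    if target.exists():
        # Keep the original optimized model so the swap can be reverted.
        shutil.copy2(target, target.with_name(target.name + ".bak"))

    shutil.copy2(source, target)
    return target


if __name__ == "__main__":
    import sys

    # Usage: python replace_model.py <package_dir> <converted_model> <target_file_name>
    if len(sys.argv) != 4:
        sys.exit("usage: replace_model.py <package_dir> <converted_model> <target_file_name>")
    print("Replaced:", replace_model(sys.argv[1], sys.argv[2], sys.argv[3]))
```

Keeping the original file name means the example's inference code needs no changes, provided the fine-tuned model has the same input and output shapes as the original.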