AidGen SDK Development Documentation

Introduction

AidGen is an inference framework specifically designed for generative Transformer models, built on top of AidLite. It aims to fully utilize various computing units of hardware (CPU, GPU, NPU) to achieve inference acceleration for large models on edge devices.

AidGen is an SDK-level development kit that provides atomic-level inference interfaces for large models, so that developers can integrate large model inference into their own applications.

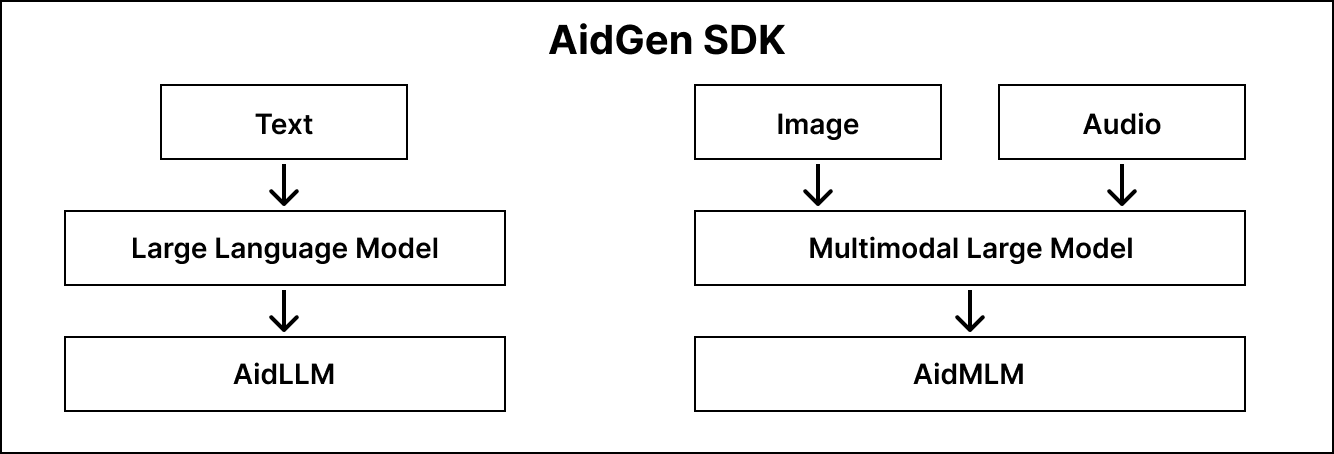

AidGen supports multiple types of generative AI models:

- Language large models -> AidLLM inference

- Multimodal large models -> AidMLM inference

The structure is shown in the diagram below:

💡Note

All large models supported by Model Farm achieve inference acceleration on Qualcomm chip NPUs through AidGen.

Support Status

Model Type Support Status

| Modality | AidLLM | AidMLM |
|---|---|---|
| Text | ✅ | / |
| Image | / | ✅ |
| Audio | / | 🚧 |

✅: Supported 🚧: Planned support

Operating System Support Status

| Language | Linux | AidLux | Android |
|---|---|---|---|
| C++ | ✅ | ✅ | / |
| Python | 🚧 | 🚧 | / |
| Java | / | / | 🚧 |

✅: Supported 🚧: Planned support

Large Language Model AidLLM SDK

Installation

sudo aid-pkg update

sudo aid-pkg -i aidgen-sdk

# copy test code

cd /home/aidlux

cp -r /usr/local/share/aidgen/examples/cpp/aidllm .

Model File Acquisition

- Model files and default configuration files can be downloaded directly through the Model Farm Large Model Section

- Retrieve and download models via `mms` (using Qwen2.5-0.5B-Instruct as an example):

# Log in

mms login

# Search for the model

mms list Qwen2.5-0.5B-Instruct

# Download the model

# Parameters:

# -m: Model Name, -p: Precision (w4a16), -c: Chipset (qcs8550)

# -b: Backend/QNN Version (qnn2.29), -d: Destination Directory

mms get -m Qwen2.5-0.5B-Instruct -p w4a16 -c qcs8550 -b qnn2.29 -d /home/aidlux/aidllm/qwen2.5-0.5b-instruct

cd /home/aidlux/aidllm/qwen2.5-0.5b-instruct

unzip qnn229_qcs8550_cl2048

mv qnn229_qcs8550_cl2048/* /home/aidlux/aidllm/

Model Performance Monitoring

💡Note

Please ensure that the sample application can be executed successfully in its entirety.

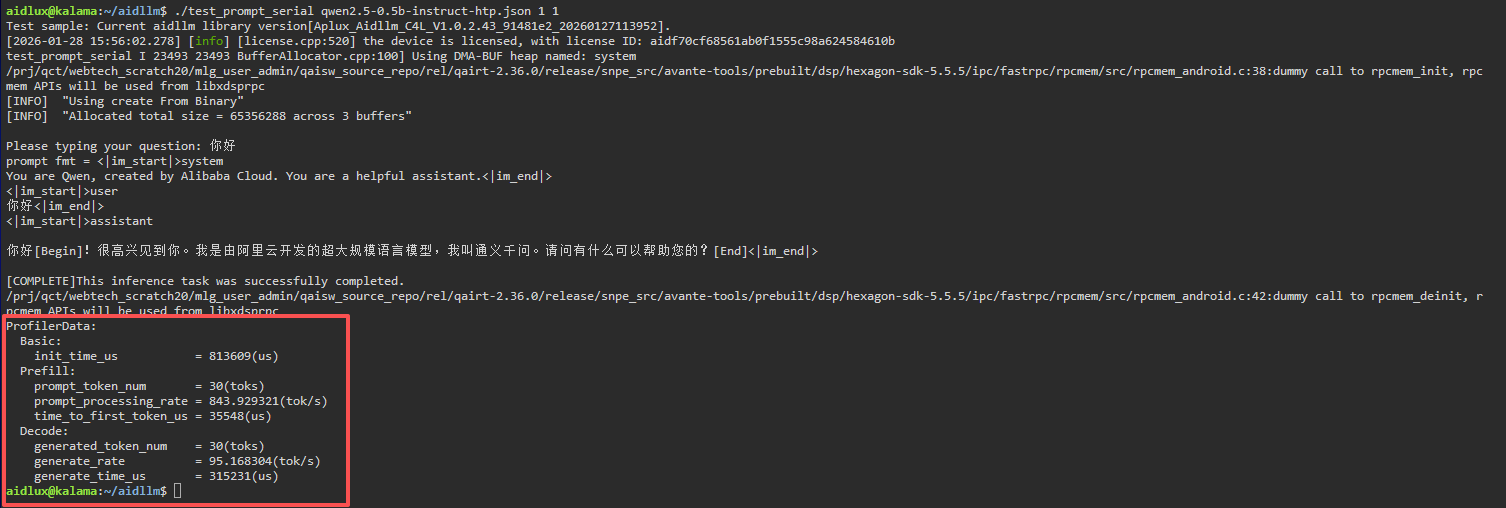

The following steps use Deploying Qwen2.5-0.5B-Instruct on Qualcomm QCS8550 as the example:

# Install dependencies

sudo apt update

sudo apt install libfmt-dev

# Compile

mkdir build && cd build

cmake .. && make

mv test_prompt_serial /home/aidlux/aidllm/

# Run after successful compilation

# The first parameter `1` enables profiler statistics

# The second parameter `1` specifies the number of inference iterations

cd /home/aidlux/aidllm/

./test_prompt_serial qwen2.5-0.5b-instruct-htp.json 1 1

- After entering the conversation content in the terminal, you will see the following log information:

Example

- Deploying Qwen2.5-0.5B-Instruct on Qualcomm QCS8550

- Deploying Qwen3 Series on Qualcomm QCS8550

- Deploying HY-MT1.5-1.8B on Qualcomm QCS8550

Multi-modal Vision Model AidMLM SDK

Model Support Status

| Model | Status |
|---|---|
| Qwen2.5-VL-3B-Instruct | ✅ |
| Qwen2.5-VL-7B-Instruct | ✅ |
| InternVL3-2B | 🚧 |
| Qwen3-VL-4B | 🚧 |
| Qwen3-VL-2B | 🚧 |

Installation

sudo aid-pkg update

sudo aid-pkg -i aidgen-sdk

# copy test code

cd /home/aidlux

cp -r /usr/local/share/aidgen/examples/cpp/aidmlm ./

Model File Acquisition

Acquire and download models directly via command line.

mms login

mms list Qwen2.5-VL-3B

mms get -m Qwen2.5-VL-3B-Instruct_392x392_ -p w4a16 -c qcs8550 -b qnn2.36 -d /home/aidlux/aidmlm/qwen2.5-vl-3b-392

cd /home/aidlux/aidmlm/qwen2.5-vl-3b-392

unzip qnn236_qcs8550_cl2048

mv qnn236_qcs8550_cl2048/* /home/aidlux/aidmlm/

Create Configuration File

cd /home/aidlux/aidmlm

vim config3b_392.json

Create the following JSON configuration file:

{

"vision_model_path":"veg.serialized.bin.aidem",

"pos_embed_cos_path":"position_ids_cos.raw",

"pos_embed_sin_path":"position_ids_sin.raw",

"vocab_embed_path":"embedding_weights_151936x2048.raw",

"window_attention_mask_path":"window_attention_mask.raw",

"full_attention_mask_path":"full_attention_mask.raw",

"llm_path_list":[

"qwen2p5-vl-3b_qnn236_qcs8550_cl2048_1_of_1.serialized.bin.aidem"

]

}

The file layout is as follows:

/home/aidlux/aidmlm

├── CMakeLists.txt

├── test_qwen25vl_abort.cpp

├── test_qwen25vl.cpp

├── demo.jpg

├── embedding_weights_151936x2048.raw

├── full_attention_mask.raw

├── position_ids_cos.raw

├── position_ids_sin.raw

├── qwen2p5-vl-3b_qnn236_qcs8550_cl2048_1_of_1.serialized.bin.aidem

├── veg.serialized.bin.aidem

└── window_attention_mask.raw

Model Performance Monitoring

💡Note

Please ensure that the sample application can be executed successfully in its entirety.
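One way to catch a missing or misnamed model file before running the sample is to check that every file named in the configuration can be opened. The sketch below is only an illustration and is not part of the SDK: `expected_model_files` and `missing_files` are hypothetical helpers, the file names are copied from the example configuration shown earlier, and the paths are assumed to be relative to the directory passed in.

```cpp
#include <cassert>
#include <fstream>
#include <string>
#include <vector>

// File names copied from the example configuration for
// Qwen2.5-VL-3B-Instruct (392x392); adjust them for other models.
inline std::vector<std::string> expected_model_files() {
    return {
        "veg.serialized.bin.aidem",
        "position_ids_cos.raw",
        "position_ids_sin.raw",
        "embedding_weights_151936x2048.raw",
        "window_attention_mask.raw",
        "full_attention_mask.raw",
        "qwen2p5-vl-3b_qnn236_qcs8550_cl2048_1_of_1.serialized.bin.aidem",
    };
}

// Hypothetical helper: returns the entries of `names` that cannot be
// opened for reading inside `dir`.
inline std::vector<std::string> missing_files(const std::string& dir,
                                              const std::vector<std::string>& names) {
    assert(!dir.empty());
    std::vector<std::string> missing;
    for (const auto& name : names) {
        std::ifstream file(dir + "/" + name, std::ios::binary);
        if (!file.good()) missing.push_back(name);
    }
    return missing;
}
```

Calling `missing_files("/home/aidlux/aidmlm", expected_model_files())` and printing the result gives a quick list of anything that still needs to be downloaded or moved into place; an empty result means all seven files could be opened.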

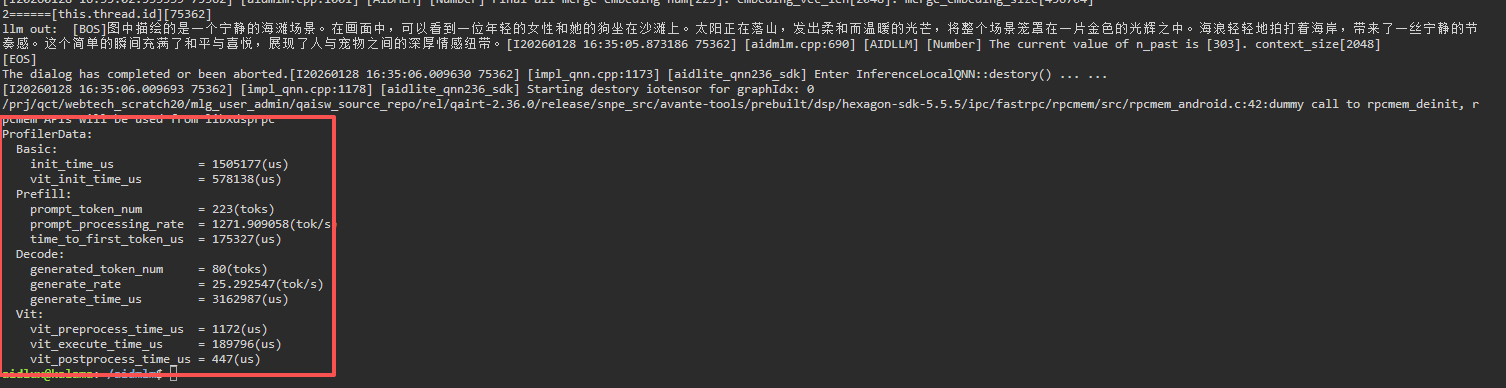

The following steps use Deploying Qwen2.5-VL-3B-Instruct (392x392) on Qualcomm QCS8550 as the example:

sudo apt update

sudo apt-get install libfmt-dev nlohmann-json3-dev

mkdir build && cd build

cmake .. && make

# Run after successful compilation

# The final argument `1` enables profiler statistics

mv test_qwen25vl /home/aidlux/aidmlm/

cd /home/aidlux/aidmlm/

./test_qwen25vl "qwen25vl3b392" "config3b_392.json" "demo.jpg" "Please describe the scene in the picture" 1

- After entering the conversation content in the terminal, you will see the following log information:

Example

Modifying Inference Parameters

AidGen supports dynamic modification of parameters related to model inference. Currently supported parameters include:

- temp

- top-k

- top-p
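To make these three parameters concrete, here is a minimal sketch of how temperature, top-k, and top-p are commonly applied to a model's output logits before a token is drawn. It shows the general technique only: AidGen's internal sampler is not described on this page and may differ, and the `Candidate` struct and `filter_logits` function are hypothetical names introduced for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper type: a token id paired with its probability.
struct Candidate {
    int token_id;
    double prob;
};

// Generic temperature / top-k / top-p filtering (not AidGen's actual code).
// Returns the surviving candidates, sorted by descending probability and
// renormalized so that their probabilities sum to 1.
std::vector<Candidate> filter_logits(const std::vector<double>& logits,
                                     double temp, std::size_t top_k, double top_p) {
    assert(!logits.empty() && temp > 0.0 && top_k > 0 && top_p > 0.0);

    // 1. Temperature: scale the logits by 1/temp, then apply a stable softmax.
    const double max_logit = *std::max_element(logits.begin(), logits.end());
    std::vector<Candidate> cands;
    double sum = 0.0;
    for (std::size_t i = 0; i < logits.size(); ++i) {
        const double p = std::exp((logits[i] - max_logit) / temp);
        cands.push_back({static_cast<int>(i), p});
        sum += p;
    }
    for (auto& c : cands) c.prob /= sum;

    // 2. Top-k: keep only the k most probable tokens.
    std::stable_sort(cands.begin(), cands.end(),
                     [](const Candidate& a, const Candidate& b) { return a.prob > b.prob; });
    if (cands.size() > top_k) cands.resize(top_k);

    // 3. Top-p: keep the shortest prefix whose cumulative probability reaches top_p.
    double cumulative = 0.0;
    std::size_t keep = 0;
    while (keep < cands.size()) {
        cumulative += cands[keep].prob;
        ++keep;
        if (cumulative >= top_p) break;
    }
    cands.resize(keep);

    // 4. Renormalize the survivors so they form a probability distribution.
    double kept_sum = 0.0;
    for (const auto& c : cands) kept_sum += c.prob;
    for (auto& c : cands) c.prob /= kept_sum;
    return cands;
}
```

In this sketch a lower `temp` concentrates probability on the highest-scoring token, a smaller `top_k` caps how many tokens can be chosen at all, and a smaller `top_p` trims the low-probability tail. A token would then be drawn at random from the returned candidates according to their probabilities.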

Refer to the following code in the test_prompt_abort.cpp file to modify parameters:

// set_sampler takes the parameter name and its new value as strings.

int ret = llm_interpreter_ptr->set_sampler("temp", "0.5");

ret = llm_interpreter_ptr->set_sampler("top-p", "0.8");

ret = llm_interpreter_ptr->set_sampler("top-k", "20");
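When several parameters are changed together, the three calls above can be driven from a small table of name/value pairs. The sketch below assumes that `set_sampler` takes the name and value as strings (as in the snippet above) and that a return value of 0 means success; the second point is an assumption, since this page does not state the return convention. To stay self-contained, the helper is templated over the interpreter type, and `FakeInterpreter` is a hypothetical stand-in that lets the example compile without the SDK.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for the SDK interpreter so the sketch compiles
// without AidGen. Only the set_sampler(name, value) shape is taken from
// the snippet above; the accepted names and return codes are assumptions.
struct FakeInterpreter {
    std::map<std::string, std::string> applied;

    int set_sampler(const std::string& name, const std::string& value) {
        if (name != "temp" && name != "top-k" && name != "top-p") return -1;
        applied[name] = value;
        return 0;  // assumption: 0 means success
    }
};

using SamplerSettings = std::vector<std::pair<std::string, std::string>>;

// Applies each (name, value) pair in order and stops at the first failure.
// Returns the name of the parameter that failed, or an empty string if
// every call returned 0.
template <typename Interpreter>
std::string apply_sampler_settings(Interpreter& interp, const SamplerSettings& settings) {
    for (const auto& setting : settings) {
        assert(!setting.first.empty());
        if (interp.set_sampler(setting.first, setting.second) != 0) return setting.first;
    }
    return std::string();
}
```

With the real SDK, the same helper could be called as `apply_sampler_settings(*llm_interpreter_ptr, {{"temp", "0.5"}, {"top-p", "0.8"}, {"top-k", "20"}})`, provided the success convention assumed here matches the SDK's.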