Deploy VLM with AidGen

Introduction

Edge deployment of Vision Language Models (VLMs) means compressing, quantizing, and deploying large models that originally ran in the cloud onto local devices, which enables offline, low-latency natural language understanding and generation. This chapter uses the AidGen inference engine to demonstrate how to deploy, load, and converse with a multimodal large model on an edge device.

In this example, multimodal large model inference runs on the device. C++ code calls the relevant interfaces to receive user input and return dialogue results in real time.

- Device: IQ9075

- System: Ubuntu 24.04

- Model: Qwen2.5-VL-3B (392x392)

Supported Platforms

| Platform | Operation Mode |
|---|---|
| IQ9075 | Ubuntu 24.04 |

Prerequisites

- IQ9075 Hardware

- Ubuntu 24.04 System

System Dependency Configuration

Configure AidLux Dependency Sources

# Download the correct public key

sudo wget -O- https://archive.aidlux.com/ubuntu24/public.key | gpg --dearmor | sudo tee /etc/apt/trusted.gpg.d/private-aidlux.gpg > /dev/null

# Edit the source file

sudo vim /etc/apt/sources.list.d/private-aidlux.list

# Add the private source entry provided by AidLux to the source file

deb [arch=arm64 signed-by=/etc/apt/trusted.gpg.d/private-aidlux.gpg] https://archive.aidlux.com/ubuntu24 noble main

# Update cache

sudo apt update

Once the update is complete, you can list the official AidLux SDK dependencies using the following command:

sudo apt list | grep aid | grep unknown

# Install software

# Essential dependencies (not included in the system by default)

sudo apt install python3 python3-pip libopencv-dev python3-opencv net-tools

# Must be installed before aidlite

sudo apt install aidlux-aistack-base aidrtcm

# Install aidlite and dependencies

sudo apt install aid-lms aidlms-sdk aidlite-sdk cmake

sudo apt-get install libfmt-dev nlohmann-json3-dev

sudo apt install aidlite-*

# DSP Support

sudo apt-get install qcom-fastrpc1

sudo apt-get install qcom-fastrpc-dev

# Install aidgen-sdk

sudo apt install aidgen-qnn240-sdk

# Install mms service

sudo apt install aid-mms

# GPU Support

sudo apt-add-repository -s ppa:ubuntu-qcom-iot/qcom-ppa

sudo apt install qcom-adreno-cl1

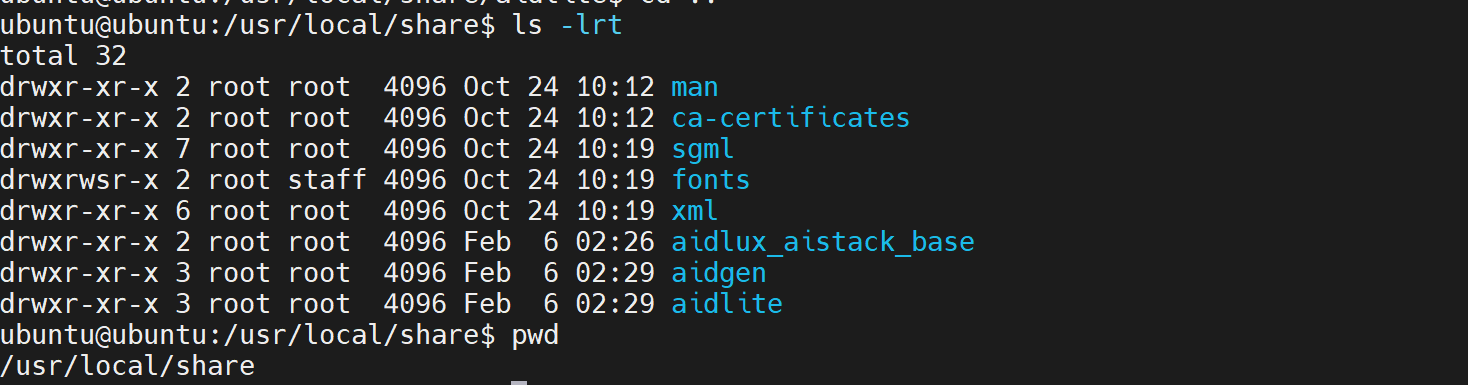

sudo ln -s /usr/lib/aarch64-linux-gnu/libOpenCL.so.1 /usr/lib/aarch64-linux-gnu/libOpenCL.so

After installation, verify that the aidlite and aidgen directories have been added to /usr/local/share.
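
The directory check described above can be scripted. The following is a minimal sketch, not part of the AidLux tooling: the helper name check_sdk_dirs is hypothetical, and the only assumption is that each SDK appears as a directory directly under the base path.

```shell
# Report whether each expected SDK directory exists under a base directory.
# Usage: check_sdk_dirs BASE_DIR NAME...
# Returns 0 if every directory is present, 1 otherwise.
# (check_sdk_dirs is a hypothetical helper, not an AidLux command.)
check_sdk_dirs() {
    base=$1
    shift
    missing=0
    for name in "$@"; do
        if [ -d "$base/$name" ]; then
            echo "found:   $base/$name"
        else
            echo "missing: $base/$name"
            missing=1
        fi
    done
    return "$missing"
}
```

For this guide the call would be `check_sdk_dirs /usr/local/share aidlite aidgen`; a non-zero exit status suggests that one of the install steps did not complete.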

Device Authorization

Obtain Device SN Code

cat /sys/devices/soc0/serial_number

Obtain Authorization File

Provide the SN to AidLux technical personnel, who will generate a device-specific license file. Place the file in the directory /etc/opt/aidlux/license/AidLuxLics.

Activate Authorization

sudo /opt/aidlux/cpf/aid-lms/manager.sh restart

Deployment

Step 1: Copy AidGen SDK Code Examples

# Copy test code

cd /home/ubuntu

cp -r /usr/local/share/aidgen/examples/cpp/aidmlm ./

Step 2: Model Acquisition

IMPORTANT

Since Qwen2.5-VL-3B (392x392) is currently in the Model Farm preview section, it must be obtained via the mms command.

Using mms requires a Model Farm account login. Please visit Model Farm Account Registration.

# Login

mms login

# Search for model

mms list Qwen2.5-VL-3B

# Download model

mms get -m Qwen2.5-VL-3B-Instruct_392x392_ -p w4a16 -c qcs8550 -b qnn2.36 -d /home/ubuntu/aidmlm/qwen2.5-vl-3b-392

cd /home/ubuntu/aidmlm/qwen2.5-vl-3b-392

unzip qnn236_qcs8550_cl2048

mv qnn236_qcs8550_cl2048/* /home/ubuntu/aidmlm/

Step 3: Create Configuration File

cd /home/ubuntu/aidmlm

vim config3b_392.json

Create the following JSON configuration file:

{

"vision_model_path":"veg.serialized.bin.aidem",

"pos_embed_cos_path":"position_ids_cos.raw",

"pos_embed_sin_path":"position_ids_sin.raw",

"vocab_embed_path":"embedding_weights_151936x2048.raw",

"window_attention_mask_path":"window_attention_mask.raw",

"full_attention_mask_path":"full_attention_mask.raw",

"llm_path_list":[

"qwen2p5-vl-3b-qnn231-qcs8550-cl2048_1_of_6.serialized.bin.aidem",

"qwen2p5-vl-3b-qnn231-qcs8550-cl2048_2_of_6.serialized.bin.aidem",

"qwen2p5-vl-3b-qnn231-qcs8550-cl2048_3_of_6.serialized.bin.aidem",

"qwen2p5-vl-3b-qnn231-qcs8550-cl2048_4_of_6.serialized.bin.aidem",

"qwen2p5-vl-3b-qnn231-qcs8550-cl2048_5_of_6.serialized.bin.aidem",

"qwen2p5-vl-3b-qnn231-qcs8550-cl2048_6_of_6.serialized.bin.aidem"

]

}

The file structure should be as follows:

/home/ubuntu/aidmlm

├── CMakeLists.txt

├── test_qwen25vl_abort.cpp

├── test_qwen25vl.cpp

├── demo.jpg

├── embedding_weights_151936x2048.raw

├── full_attention_mask.raw

├── position_ids_cos.raw

├── position_ids_sin.raw

├── qwen2p5-vl-3b-qnn231-qcs8550-cl2048_1_of_6.serialized.bin.aidem

├── ... (other LLM parts)

├── veg.serialized.bin.aidem

├── window_attention_mask.raw

Step 4: Compile and Run
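
Before compiling, it may be worth confirming that every file named in config3b_392.json is actually present in /home/ubuntu/aidmlm. The sketch below is one way to do that, under stated assumptions: the helper names list_config_files and check_model_files are hypothetical and not part of the AidGen SDK, file names are assumed to contain no spaces, and the scan is a plain-text match on quoted names ending in .raw or .aidem rather than a real JSON parse.

```shell
# Print every quoted file name ending in .raw or .aidem found in a config file.
# This is a plain-text scan, not a JSON parser.
# (Hypothetical helper, not part of the AidGen SDK.)
list_config_files() {
    grep -oE '"[^"]+\.(raw|aidem)"' "$1" | tr -d '"'
}

# Check that each file named in CONFIG exists in MODEL_DIR.
# Usage: check_model_files CONFIG MODEL_DIR
# Prints one "missing: NAME" line per absent file; returns 1 if any is absent.
# Assumes file names contain no whitespace.
check_model_files() {
    config=$1
    model_dir=$2
    status=0
    for f in $(list_config_files "$config"); do
        if [ ! -f "$model_dir/$f" ]; then
            echo "missing: $f"
            status=1
        fi
    done
    return "$status"
}
```

With the layout shown above, the call would be `check_model_files config3b_392.json /home/ubuntu/aidmlm`. Any "missing" line points to a file name in the configuration that does not match a file on disk.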

# Update and install build dependencies

sudo apt update

sudo apt-get install libfmt-dev nlohmann-json3-dev

# Compile

mkdir build && cd build

cmake .. && make

mv test_qwen25vl /home/ubuntu/aidmlm/

# Run after successful compilation

cd /home/ubuntu/aidmlm/

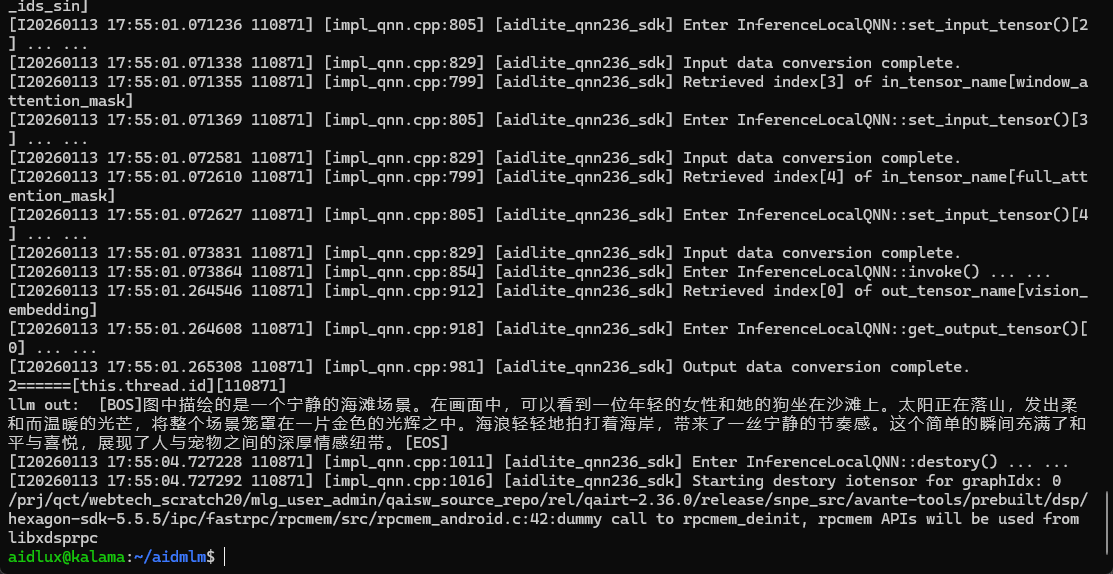

./test_qwen25vl "qwen25vl3b392" "config3b_392.json" "demo.jpg" "Please describe the scene in the image."

The first argument to test_qwen25vl is the model_type defined in the test code test_qwen25vl.cpp. The currently supported types are:

| Model | Type |
|---|---|
| Qwen2.5-VL-3B (392X392) | qwen25vl3b392 |
| Qwen2.5-VL-3B (672X672) | qwen25vl3b672 |
| Qwen2.5-VL-7B (392X392) | qwen25vl7b392 |
| Qwen2.5-VL-7B (672X672) | qwen25vl7b672 |

💡Note

When downloading different models, you must set the corresponding model_type. For example, if you download the Qwen2.5-VL-7B (672X672) model, use model_type = "qwen25vl7b672".
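
To avoid typing the model_type by hand, the mapping in the table can be wrapped in a small shell function. This is only a sketch: model_type_for is a hypothetical helper name, and it covers only the four combinations listed above.

```shell
# Map a model size (3B or 7B) and input resolution (392 or 672) to the
# model_type string listed in the table of supported types.
# Prints an error to stderr and returns 1 for any other combination.
# (model_type_for is a hypothetical helper, not part of the AidGen SDK.)
model_type_for() {
    case "$1:$2" in
        3B:392) echo "qwen25vl3b392" ;;
        3B:672) echo "qwen25vl3b672" ;;
        7B:392) echo "qwen25vl7b392" ;;
        7B:672) echo "qwen25vl7b672" ;;
        *) echo "unsupported model: $1 at $2x$2" >&2; return 1 ;;
    esac
}
```

For the model used in this guide, the run command could then be written as `./test_qwen25vl "$(model_type_for 3B 392)" "config3b_392.json" "demo.jpg" "Please describe the scene in the image."`.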

- Execution result: