Edge Deployment of HY-MT1.5-1.8B

Introduction

HY-MT1.5-1.8B is part of version 1.5 of Tencent's Hunyuan translation model family (HY-MT1.5). This release upgrades the WMT25 champion model, is optimized for explanatory translation and mixed-language scenarios, and adds support for terminology intervention, contextual translation, and formatted translation. Although HY-MT1.5-1.8B has less than one-third the parameters of HY-MT1.5-7B, its translation quality is comparable to that of the larger model, so it balances high speed with high quality. After quantization, the 1.8B model can be deployed on edge devices for real-time translation.

This chapter shows how to deploy HY-MT1.5-1.8B on an edge device, load it, and run translations with it. Two deployment methods are provided:

- AidGen C++ API

- AidGenSE OpenAI API

In this example, large language model (LLM) inference runs entirely on the device: the application calls the relevant interfaces to pass in user input and receives the translation results in real time. The test environment is:

- Device: IQ9075

- System: Ubuntu 24.04

- Model: HY-MT1.5-1.8B

Supported Platforms

| Platform | Operating System |
|---|---|
| IQ9075 | Ubuntu 24.04 |

Prerequisites

- IQ9075 Hardware

- Ubuntu 24.04 System

System Dependency Configuration

Configure AidLux Dependency Sources

```bash
# Download the AidLux public key and store it as an APT keyring
sudo wget -O- https://archive.aidlux.com/ubuntu24/public.key | gpg --dearmor | sudo tee /etc/apt/trusted.gpg.d/private-aidlux.gpg > /dev/null

# Edit the source file
sudo vim /etc/apt/sources.list.d/private-aidlux.list
```

Add the private source entry provided by AidLux to the file:

```
deb [arch=arm64 signed-by=/etc/apt/trusted.gpg.d/private-aidlux.gpg] https://archive.aidlux.com/ubuntu24 noble main
```

Then update the package cache:

```bash
sudo apt update
```

After the update completes, list the official AidLux SDK packages with the following command:

sudo apt list | grep aid | grep unknown# Install software

# Must be installed first, not included in the system by default

sudo apt install python3 python3-pip libopencv-dev python3-opencv net-tools

# Must be installed before aidlite

sudo apt install aidlux-aistack-base aidrtcm

# Install aidlite and dependencies

sudo apt install aid-lms aidlms-sdk aidlite-sdk cmake

sudo apt-get install libfmt-dev nlohmann-json3-dev

sudo apt install aidlite-*

# Support DSP

sudo apt-get install qcom-fastrpc1

sudo apt-get install qcom-fastrpc-dev

# Install aidgen-sdk

sudo apt install aidgen-qnn240-sdk

# Install mms service

sudo apt install aid-mms

# Support GPU

sudo apt-add-repository -s ppa:ubuntu-qcom-iot/qcom-ppa

sudo apt install qcom-adreno-cl1

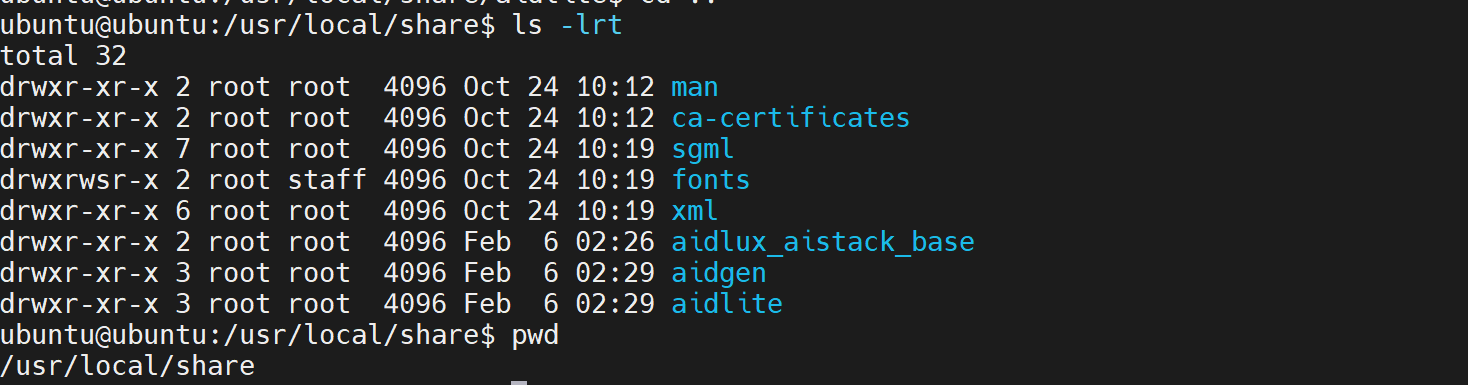

sudo ln -s /usr/lib/aarch64-linux-gnu/libOpenCL.so.1 /usr/lib/aarch64-linux-gnu/libOpenCL.soAfter installation, check that the /usr/local/share directory now includes the aidlite and aidgen folders.

Device Authorization

Obtain Device SN

```bash
cat /sys/devices/soc0/serial_number
```

Obtain Authorization File

Send the SN to AidLux technical support, who will generate a license file for this device, and place the file at the path /etc/opt/aidlux/license/AidLuxLics.
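Before activating, you may want to read the SN programmatically and confirm that the license has been put in place. The following is a minimal C++17 sketch; read_serial_number and license_in_place are hypothetical helpers, not part of the AidLux SDK. The license check only looks for something at the path above; whether the license is valid is decided when the authorization is activated, not here.

```cpp
#include <filesystem>
#include <fstream>
#include <optional>
#include <string>

namespace fs = std::filesystem;

// Reads the device SN from the sysfs file used above and trims surrounding
// whitespace. Returns std::nullopt if the file is unreadable or empty.
std::optional<std::string> read_serial_number(
        const fs::path& file = "/sys/devices/soc0/serial_number") {
    std::ifstream in(file);
    std::string line;
    if (!in || !std::getline(in, line)) return std::nullopt;
    const auto first = line.find_first_not_of(" \t\r\n");
    if (first == std::string::npos) return std::nullopt;
    const auto last = line.find_last_not_of(" \t\r\n");
    return line.substr(first, last - first + 1);
}

// Presence check only: true if anything exists at the license path.
bool license_in_place(const fs::path& path = "/etc/opt/aidlux/license/AidLuxLics") {
    std::error_code ec;  // non-throwing overload: permission errors yield false
    return fs::exists(path, ec);
}
```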

Activate Authorization

```bash
sudo /opt/aidlux/cpf/aid-lms/manager.sh restart
```

AidGen Case Deployment

Step 1: Copy AidGen SDK Code Examples

```bash
# Copy the example code
cd /home/ubuntu
cp -r /usr/local/share/aidgen/examples/cpp/aidllm .
```

Step 2: Download Model Resources

Because HY-MT1.5-1.8B is currently in the Model Farm preview section, it must be downloaded with the mms command.

Using mms requires logging in with a Model Farm account. To create an account, visit Model Farm Account Registration.

```bash
# Log in
mms login

# Search for the model
mms list HY

# Download the model
mms get -m HY-MT1.5-1.8B -p w4a16 -c qcs8550 -b qnn2.36 -d /home/ubuntu/aidllm/hy-mt

# Unpack the package and move its contents into the example directory
cd /home/ubuntu/aidllm/hy-mt
unzip qnn236_qcs8550_cl2048.zip
mv qnn236_qcs8550_cl2048/* /home/ubuntu/aidllm
```

Step 3: Create Configuration File

```bash
cd /home/ubuntu/aidllm
vim hy-mt-aidgen-config.json
```

Add the following JSON configuration to the file:

```json
{
  "backend_type": "genie",
  "prefix_path": "kv-cache.primary.qnn-htp",
  "model": {
    "path": [
      "hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_1_of_2.serialized.bin.aidem",
      "hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_2_of_2.serialized.bin.aidem"
    ]
  }
}
```

Step 4: Confirm Resource Files

The files should be laid out as follows:

```
/home/ubuntu/aidllm
├── CMakeLists.txt
├── test_prompt_abort.cpp
├── test_prompt_serial.cpp
├── aidgen_chat_template.txt
├── chat.txt
├── htp_backend_ext_config.json
├── hy-mt1.5-1.8b-htp.json
├── hy-mt-aidgen-config.json
├── kv-cache.primary.qnn-htp
├── hy-mt1.5-1.8b-tokenizer.json
├── hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_1_of_2.serialized.bin.aidem
└── hy-mt1.5-1.8b_qnn236_qcs8550_cl2048_2_of_2.serialized.bin.aidem
```

Step 5: Set Conversation Template

💡Note

Please refer to the aidgen_chat_template.txt file in the model resource package for the conversation template.

Modify test_prompt_serial.cpp so that it uses this model's prompt template:

```cpp
// test_prompt_serial.cpp
// ...
// lines 43-47
std::string prompt_template_type = "hy-mt";
if (prompt_template_type == "hy-mt") {
    prompt_template = "<|hy_begin▁of▁sentence|><|hy_place▁holder▁no▁3|>\n<|hy_begin▁of▁sentence|>\n<|hy_User|>Translate the following segment into Chinese, without additional explanation.\n\n{0}\n<|hy_Assistant|>";
}
```

Step 6: Compile and Run

# Install dependencies

sudo apt update

sudo apt install libfmt-dev

# Compile

mkdir build && cd build

cmake .. && make

# Run after successful compilation

# The first parameter 1 indicates enabling profiler statistics

# The second parameter 1 indicates the number of inference loops

mv test_prompt_serial /home/ubuntu/aidllm/

cd /home/ubuntu/aidllm/

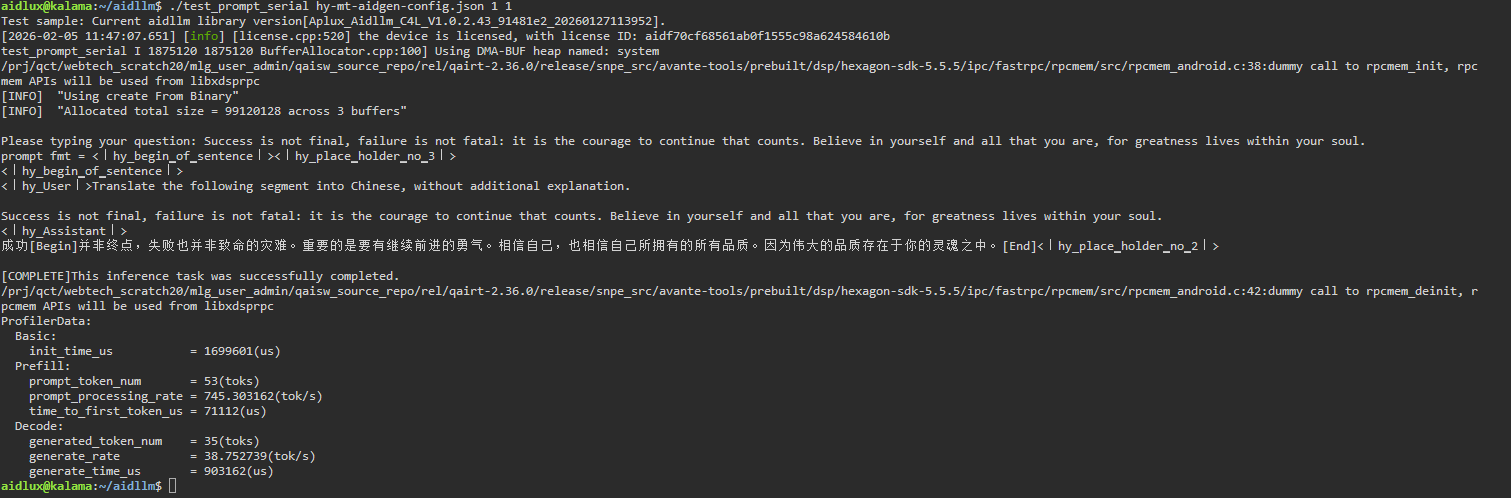

./test_prompt_serial hy-mt-aidgen-config.json 1 1- Enter the sentence you want to translate in the terminal.
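The comments on the run command describe three positional arguments: the configuration file, the profiler switch, and the loop count. If you write your own front end around the sample, validating them could look like the following minimal C++17 sketch. RunOptions and parse_run_options are hypothetical names; the sample's actual argument handling is not shown in this guide and may differ (for example, it may accept profiler values other than 0 and 1).

```cpp
#include <optional>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical view of: ./test_prompt_serial <config.json> <profiler 0|1> <loops>
struct RunOptions {
    std::string config_path;
    bool profiler = false;  // 1 = enable profiler statistics
    int loops = 1;          // number of inference loops
};

std::optional<RunOptions> parse_run_options(const std::vector<std::string>& args) {
    if (args.size() != 4) return std::nullopt;  // program name + 3 arguments
    RunOptions opts;
    opts.config_path = args[1];
    if (args[2] != "0" && args[2] != "1") return std::nullopt;
    opts.profiler = (args[2] == "1");
    try {
        std::size_t used = 0;
        opts.loops = std::stoi(args[3], &used);
        // Reject trailing characters ("3x") and non-positive loop counts.
        if (used != args[3].size() || opts.loops < 1) return std::nullopt;
    } catch (const std::exception&) {  // std::invalid_argument or std::out_of_range
        return std::nullopt;
    }
    return opts;
}
```

In a main function it could be called as parse_run_options(std::vector<std::string>(argv, argv + argc)), printing a usage message when it returns std::nullopt.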

💡Note

With the conversation template set in Step 5, the model acts as a translator: any text you enter in another language is translated into Chinese.
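The {0} placeholder in the Step 5 template marks where the sentence you type is inserted. This guide does not show how test_prompt_serial.cpp performs the substitution, so the following is only a minimal standard-library sketch of the same idea. build_hy_mt_prompt is a hypothetical helper, and its target_language parameter is an assumption for illustration: the Step 5 template hard-codes Chinese, and other target-language phrasings have not been checked against the model.

```cpp
#include <string>

// Builds the Step 5 HY-MT prompt for one input sentence.
// target_language defaults to "Chinese", as in the original template.
std::string build_hy_mt_prompt(const std::string& source_text,
                               const std::string& target_language = "Chinese") {
    std::string prompt =
        "<|hy_begin▁of▁sentence|><|hy_place▁holder▁no▁3|>\n<|hy_begin▁of▁sentence|>\n"
        "<|hy_User|>Translate the following segment into " + target_language +
        ", without additional explanation.\n\n{0}\n<|hy_Assistant|>";
    // Replace the {0} placeholder with the user's input.
    const std::string placeholder = "{0}";
    const auto pos = prompt.find(placeholder);
    if (pos != std::string::npos) {
        prompt.replace(pos, placeholder.size(), source_text);
    }
    return prompt;
}
```

For example, build_hy_mt_prompt("Good morning") yields the Step 5 template with "Good morning" in place of {0}.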